Xfiles a Factor in Icinga2 Graphite Graphs

It seems I celebrated a little too soon after figuring out how to add extra archives into the whisper DB files behind graphite for icinga2.

This blog post is a bit of a ramble and may not make 100% sense if you didn’t read my other icinga2 graphite post first. Check that out here.

The flaw in my plan wasn’t apparent because I was only looking at the output of the ping4 plug-in in my testing, whereas I should have been checking some other graphs as well.

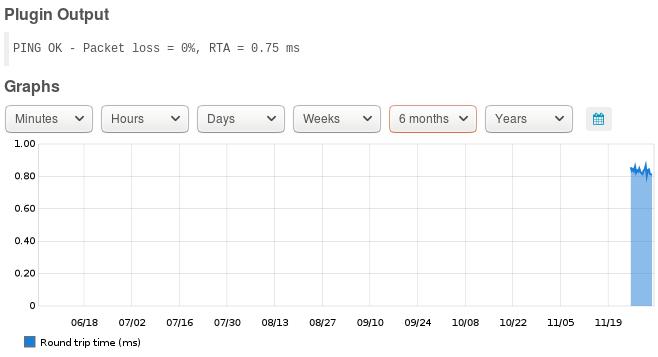

Here we have a ping graph for five days of history on a server at work and it’s clearly working better than before as I only had one day of history originally.

This five day graph represents data in Archive 1 of the whisper file. In this particular instance I’m keeping 10 minute increments for a week which is different from my example in my first blog but the end result is essentially the same.

The six month view gives us a look at the data from Archive 2, which is set to 30 minute increments for a year. That gave me a false sense of success, right up until I looked at some of the other services graphite was presenting in icingaweb2.

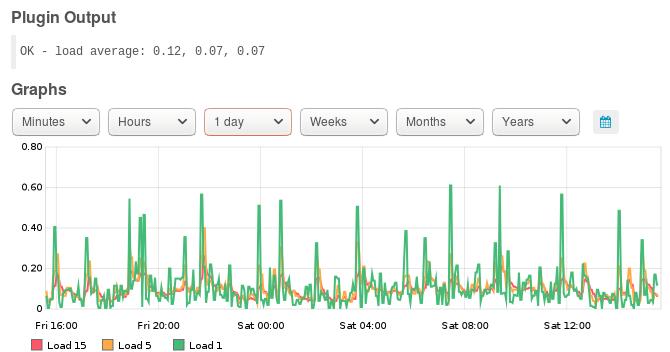

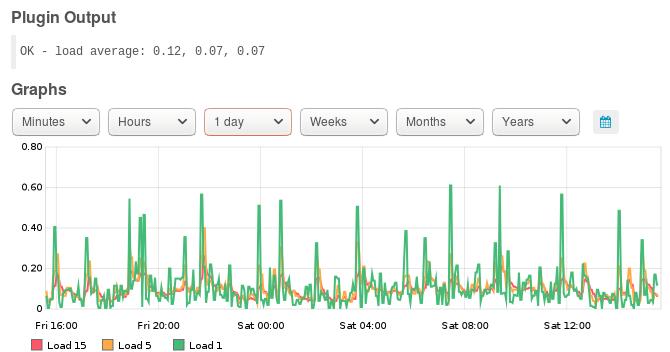

Load for 1 day is looking good and represents the one minute resolution records for a day in Archive 0. When we view a graph that requires either of the other two archives, though, the date range is clearly supported but the data is somewhat lacking.

Delve into the details

I dug a little deeper into the world of .wsp files to get an understanding of what was happening and rather than using a shovel for the excavation I used the whisper-dump utility.

Whisper-dump does what it says on the label and dumps all of the data in a .wsp file out in a relatively human readable format.

The dump output consists of the header information which I extracted with whisper-info in my first post and then a raw dump of the data for as many archives as you have created in the file.

    Meta data:
      aggregation method: average
      max retention: 31536000
      xFilesFactor: 0.5

    Archive 0 info:
      offset: 52
      seconds per point: 60
      points: 1440
      retention: 86400
      size: 17280

    Archive 1 info:
      offset: 17332
      seconds per point: 600
      points: 1008
      retention: 604800
      size: 12096

    Archive 2 info:
      offset: 29428
      seconds per point: 1800
      points: 17520
      retention: 31536000
      size: 210240

    Archive 0 data:
    0: 1543097400, 0.0015430000000000001006417171822704404
    1: 1543443060, 0.0016889999999999999631683511580604318
    2: 1543097520, 0.0016540000000000000882072193064686871
    3: 1543443180, 0.00154600000000000006514233596988106
    ....... (1440 lines of data)

    Archive 1 data:
    0: 1543104000, 0.0016808000000000003059524855686390765
    1: 1543104600, 0.0018087999999999999477945378245635766
    2: 1543105200, 0.001638599999999999822300478236059007
    3: 1543105800, 0.0016849999999999996491001352794114609
    ........ (1008 lines of data)

    Archive 2 data:
    0: 1543104000, 0.0017094000000000000253491672097538867
    1: 1543105800, 0.0018563333333333330993353271765045065
    2: 1543107600, 0.0016944000000000002028460732717007886
    3: 1543109400, 0.0018723333333333334882464527026968426
    ........ (17520 lines of data)

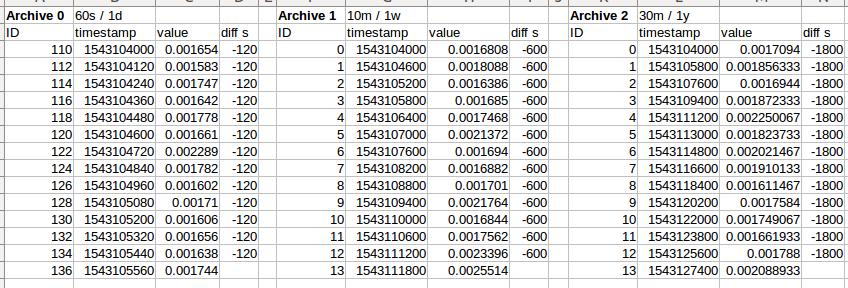

The header confirms the archive structure we created using whisper-resize in the first blog post and the rest of the output is about 20,000 lines of text which I’ve cropped down for the sake of readability.

For Archive 0 there are 1,440 rows, which is the number of minutes in 24 hours. Similarly Archive 1 has 1,008 rows for 10 minute resolution across a week and Archive 2 has 17,520 rows giving 30 minute resolution over a year.
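Those row counts, and the offsets and sizes in the header, fall straight out of the retention settings. Here is a rough sketch of the arithmetic. It assumes whisper's usual on-disk layout of a 16 byte metadata block, 12 bytes of header per archive and 12 bytes per data point; the function name `archive_layout` is my own and not part of whisper.

```python
# Sketch only: reproduces the header numbers from the whisper-dump output.
# Assumed layout: 16-byte metadata block, 12-byte header per archive and
# 12-byte points (4-byte timestamp + 8-byte double).
METADATA_SIZE = 16
ARCHIVE_INFO_SIZE = 12
POINT_SIZE = 12


def archive_layout(retentions):
    """retentions: list of (seconds_per_point, retention_seconds) tuples."""
    # The first archive starts right after the metadata and archive headers.
    offset = METADATA_SIZE + ARCHIVE_INFO_SIZE * len(retentions)
    layout = []
    for seconds_per_point, retention in retentions:
        points = retention // seconds_per_point
        size = points * POINT_SIZE
        layout.append({"offset": offset, "points": points, "size": size})
        offset += size
    return layout


if __name__ == "__main__":
    day, week, year = 86400, 7 * 86400, 365 * 86400
    # 1 minute for a day, 10 minutes for a week, 30 minutes for a year
    for i, arc in enumerate(archive_layout([(60, day), (600, week), (1800, year)])):
        print(f"Archive {i}: {arc}")
```

Running it against the three archives in this file gives the same offset, points and size values that whisper-dump reports in its header.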

The data points are recorded as a record ID, Unix epoch time stamp and then the data value which in this case is ping return time in seconds.

Unix epoch time stamps are the number of seconds since 00:00:00 on January 1st, 1970 UTC for those not familiar with the dark arts.
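If you would rather not do the conversion in a spreadsheet, a few lines of Python will turn the timestamps into something readable. This is just a convenience sketch; `epoch_to_utc` is a helper name I made up for the example.

```python
from datetime import datetime, timezone


def epoch_to_utc(timestamp):
    """Format a Unix epoch timestamp as a UTC date and time string."""
    return datetime.fromtimestamp(timestamp, tz=timezone.utc).strftime(
        "%Y-%m-%d %H:%M:%S"
    )


if __name__ == "__main__":
    # The first two records of Archive 0 and the first record of Archive 1
    for ts in (1543097400, 1543443060, 1543104000):
        print(ts, "->", epoch_to_utc(ts))
```

The first two Archive 0 records come out as 2018-11-24 22:10:00 and 2018-11-28 22:11:00 UTC, so neighbouring records in that archive are four days apart rather than one minute.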

Looking at that data you might have quickly realised that the ID column and timestamps are not in sync for Archive 0. That is because writes to the whisper file “wrap around” after a day, and we don’t have a data point for every record. More on that later.

Archives 1 and 2 are sequential though, as they have not wrapped yet, and there is always a data point for them because they are an aggregate (in this case the average) of Archives 0 and 1 respectively.
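The wrap-around is easier to see with a bit of arithmetic. The sketch below is a simplified model of a circular archive, not whisper's actual code: it assumes the timestamp stored in record 0 is the reference point and that every later write lands at its distance from that reference, modulo the number of points. `slot_index` is a name I picked for the example.

```python
def slot_index(timestamp, base_timestamp, seconds_per_point, points):
    """Return the record ID a timestamp lands in for a circular archive."""
    distance = (timestamp - base_timestamp) // seconds_per_point
    # Once the archive is full the writes wrap back around to record 0.
    return distance % points


if __name__ == "__main__":
    base = 1543097400  # timestamp held in record 0 of Archive 0 in the dump
    for ts in (1543097400, 1543443060, 1543097520, 1543443180):
        print(ts, "-> record", slot_index(ts, base, 60, 1440))
```

With 60 second points and 1,440 records, the four timestamps from the top of the Archive 0 dump land in records 0, 1, 2 and 3, even though the odd numbered ones were written four days after the even numbered ones.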

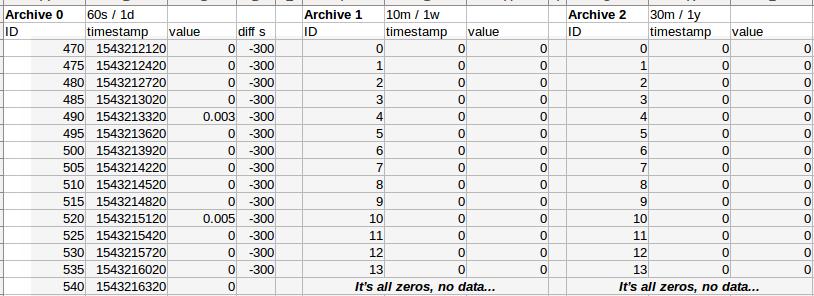

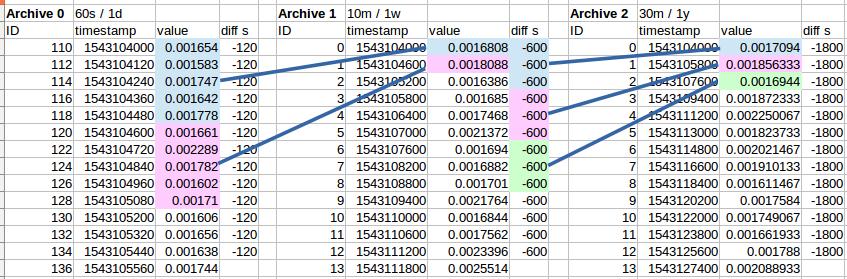

To make slightly more sense of the raw data I imported it into LibreOffice Calc and sorted by the time stamp.

If you’re really quick at maths you’ll notice that the Archive 0 data points are actually 120 seconds apart, but for clarity I added the “diff s” column.

This is because I’m only pinging things once every two minutes. Other than that little anomaly everything is good with Archive 1 and 2 showing average values so it still wasn’t obvious what was broken just yet.

Eureka!

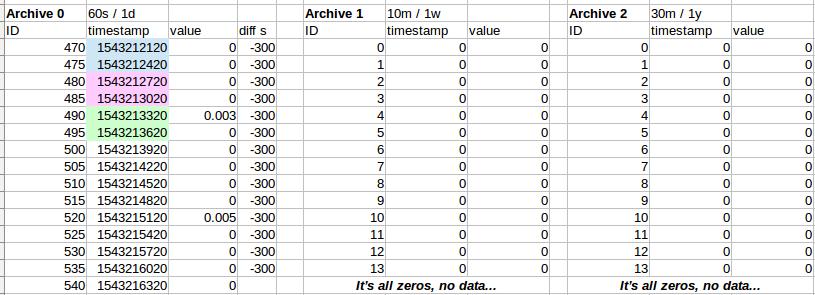

Once I’d done the same import and conversion with the raw data from the load service I had a eureka moment, but only because I’d just spent more time reading the graphite documentation than I’m prepared to admit in polite company.

The data points are 300 seconds apart which makes sense as I’m only checking processor load every five minutes. If I were to optimise the whisper files for every service I’d probably use whisper-resize and carbon-cache storage settings to set all of the load files to 5 minute resolution in Archive 0.

The reality of that is that the time/cost benefit for me while rolling out icinga2 is not really there and even at work with 20,000 + graphed items in graphite / icinga2 so far it’s only a few GB of disk space being consumed.
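As a sanity check on the disk space claim, here is a back-of-envelope estimate. It assumes every one of the 20,000 files uses the same three archive layout as the dump earlier in this post, 12 bytes per data point, and a 16 byte metadata block plus 12 bytes of header per archive. The function name is mine.

```python
# Assumed whisper sizes: 16-byte metadata, 12 bytes per archive header,
# 12 bytes per stored point.
METADATA_SIZE = 16
ARCHIVE_INFO_SIZE = 12
POINT_SIZE = 12


def whisper_file_size(retentions):
    """Size in bytes of a file with the given (step, retention) archives."""
    header = METADATA_SIZE + ARCHIVE_INFO_SIZE * len(retentions)
    data = sum((retention // step) * POINT_SIZE for step, retention in retentions)
    return header + data


if __name__ == "__main__":
    per_file = whisper_file_size([(60, 86400), (600, 604800), (1800, 31536000)])
    total = per_file * 20000
    print(f"{per_file} bytes per file, {total / 1e9:.2f} GB for 20,000 files")
```

That works out to a little under 240 KB per file and just under 5 GB in total, which lines up with the "few GB" I see on disk.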

What went wrong?

So at this point we need to refer back to the graphite, or more specifically carbon-cache documentation. The value of the xFilesFactor setting in the .wsp is where we need to be looking.

"xFilesFactor should be a floating point number between 0 and 1, and specifies what fraction of the previous retention level's slots must have non-null values in order to aggregate to a non-null value. The default is 0.5."

ref: https://graphite.readthedocs.io/en/latest/config-carbon.html

What that means is if you want the aggregation of data to flow to the lower resolution archives in your whisper file by default you need to have 50% coverage of possible data points. In this example that means five data points out of the ten possible for each 10 minute slot to be averaged out and land in Archive 1.
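To make the rule concrete, here is a small model of how one lower resolution slot gets filled when the aggregation method is average. It is an illustration of the behaviour described in the documentation, not code lifted from whisper, and `aggregate_average` is a hypothetical name.

```python
def aggregate_average(values, x_files_factor):
    """Average one slot's worth of higher resolution points.

    values holds one entry per possible point, with None where nothing was
    recorded. Returns None when too few points are present.
    """
    known = [v for v in values if v is not None]
    if not known:
        return None  # nothing to aggregate, whatever the factor is
    coverage = len(known) / len(values)
    if coverage < x_files_factor:
        return None  # not enough non-null points for this slot
    return sum(known) / len(known)


if __name__ == "__main__":
    five_of_ten = [1.0, None] * 5
    two_of_ten = [2.0, None, None, None, None, 4.0, None, None, None, None]
    print(aggregate_average(five_of_ten, 0.5))
    print(aggregate_average(two_of_ten, 0.5))
    print(aggregate_average(two_of_ten, 0))
```

Five points out of ten just scrapes over the default of 0.5, two out of ten returns nothing, and with a factor of 0 a single point is enough.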

This worked out on ping4 because the resolution of Archive 0 was one minute and I was doing the ping every two minutes for 50% coverage.

To state it a different way: If I’d been pinging every 3 minutes I’d only have roughly 33% coverage and the aggregation would not have worked. Pinging every minute would give 100% coverage.

This was an accident though, as at that stage I had read just enough of the graphite documentation to make it work and having precisely 50% was good luck, not good management.

For load, however, I’m not even close to the default 50%. When you look at the data available for aggregation down to the ten minute resolution of Archive 1, only two of the possible ten points are present, which is 20%.
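The same sums can be done for any check interval. This sketch works out the long-run average coverage for a service and says whether it clears a given xFilesFactor. It assumes checks arrive at a perfectly steady interval, which real icinga2 checks only approximate, and the function name is my own.

```python
def coverage(check_interval, fine_step, coarse_step):
    """Average fraction of fine-resolution slots filled per coarse slot.

    All arguments are in seconds.
    """
    slots = coarse_step // fine_step
    # Checking more often than the fine step cannot fill more than every slot.
    filled = min(slots, coarse_step / check_interval)
    return filled / slots


if __name__ == "__main__":
    x_files_factor = 0.5  # the whisper default
    for interval in (60, 120, 180, 300):
        cov = coverage(interval, fine_step=60, coarse_step=600)
        verdict = "aggregates" if cov >= x_files_factor else "dropped"
        print(f"check every {interval:>3}s: {cov:.0%} coverage, {verdict}")
```

Only the one and two minute checks clear the default of 0.5 when rolling up from one minute to ten minute points. The five minute load check sits at 20% and never makes it into Archive 1.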

Fixing things up

There are two possible ways you can fix this problem: create individual rules in /etc/carbon-cache/storage-schemas.conf for each metric you keep in graphite / icinga2 to match your polling periods, or just hit it with a sledgehammer as I did and set xFilesFactor = 0 for everything.

If you had a small icinga2 installation you might consider polling everything every minute as well if all of the hosts were on one LAN and performance was not a concern.

Even with the modest config I run for my home office deployment I’m now over 800 services monitored on 38 hosts. There are even two remote monitoring zones across VPNs to AWS and Linode.

Many of those monitored services are things like backup completion which you only need to check on once an hour at most. Other things like available patches and SSL certificate expiration only need to be checked once a day, maybe less.

On a larger deployment you’ll quickly have thousands of monitored services. The processor load and network bandwidth consumed to monitor things every minute can become a major issue.

So, with that in mind I kept my mix of monitoring periods and just changed all of my whisper xFilesFactors to be 0. This means that you only need one data point in Archive 0 for each Archive 1 period for the aggregation to work.

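To make this change for new whisper files I modified my default rules. One thing to watch: carbon-cache only reads pattern and retentions from /etc/carbon-cache/storage-schemas.conf. The xFilesFactor setting is read from storage-aggregation.conf in the same directory, so the default rule is split across the two files.

storage-schemas.conf:

    [default]
    pattern = .*
    retentions = 60s:1d,5m:1w,15m:1y

storage-aggregation.conf:

    [default]
    pattern = .*
    xFilesFactor = 0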

I then ran a slightly updated bit of find-foo across the server to update the existing files.

    cd /var/lib/graphite/whisper/icinga2
    # Quote the pattern so the shell does not expand it before find sees it
    find . -name '*.wsp' -exec whisper-resize --xFilesFactor=0 '{}' 60s:1d 5m:1w 15m:1y \;
    # The rewritten files need to belong to the carbon user again
    chown -R _graphite:_graphite *
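To confirm the resize actually took, you can read the setting back out of the file header. whisper-info will show it, or you can peek at the first 16 bytes yourself. The sketch below assumes the standard whisper metadata layout (aggregation type, max retention, xFilesFactor and archive count, big-endian) and only maps the five aggregation codes I am sure of. `read_whisper_metadata` is a name I made up, not a whisper function.

```python
import struct

# Assumed header layout: two unsigned longs, a float, one unsigned long.
METADATA_FORMAT = "!2LfL"
METADATA_SIZE = struct.calcsize(METADATA_FORMAT)  # 16 bytes
AGGREGATION_METHODS = {1: "average", 2: "sum", 3: "last", 4: "max", 5: "min"}


def read_whisper_metadata(path):
    """Return the metadata block of a .wsp file as a dict."""
    with open(path, "rb") as handle:
        raw = handle.read(METADATA_SIZE)
    if len(raw) != METADATA_SIZE:
        raise ValueError(f"{path} is too short to be a whisper file")
    agg_type, max_retention, xff, archive_count = struct.unpack(METADATA_FORMAT, raw)
    return {
        "aggregation method": AGGREGATION_METHODS.get(agg_type, f"unknown ({agg_type})"),
        "max retention": max_retention,
        "xFilesFactor": xff,
        "archives": archive_count,
    }


if __name__ == "__main__":
    import sys

    # Pass one or more .wsp paths on the command line
    for wsp_path in sys.argv[1:]:
        print(wsp_path, read_whisper_metadata(wsp_path))
```

Any file still reporting an xFilesFactor of 0.5 was missed by the find command.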

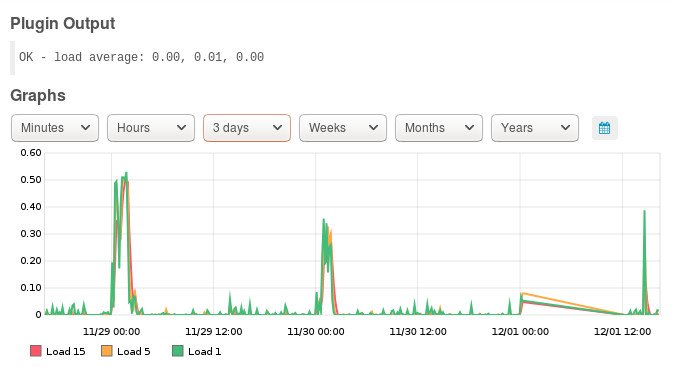

After a restart of carbon-cache and waiting a couple of days I now have Archives populating and graphs graphing.

In typical software-demo style there is a big glitch in that graph. That was caused by a power cut last night that lasted longer than my home UPS could hold the hypervisor up.

The graph, however, is now a thing of beauty.

I’ve updated my original blog post to include references to xFilesFactor, so if you use the example settings from there you’ll be fine as well. Post a comment below if you find any glaring errors or found this useful.